Fake News: Why Climate Science Communication is Failing on YouTube

Albright’s map of the news and information ecosystem shows how rightwing sites are dominating sites like YouTube and Google, bound tightly together by millions of links.

Last year, an article in The Guardian about Cambridge Analytica and Robert Mercer noted:

As, it turns out, the liberal media is now. We are scattered, separate, squabbling among ourselves and being picked off like targets in a shooting gallery. Increasingly, there’s a sense that we are talking to ourselves. And whether it’s Mercer’s millions or other factors, Jonathan Albright’s map of the news and information ecosystem shows how rightwing sites are dominating sites like YouTube and Google, bound tightly together by millions of links. Is there a central intelligence to that, I ask Albright? “There has to be. There has to be some type of coordination. You can see from looking at the map, from the architecture of the system, that this is not accidental. It’s clearly being led by money and politics.”

Related: The Koch intelligence agency

Further …

“Look at this,” he says and shows me how, before the US election, hundreds upon hundreds of websites were set up to blast out just a few links, articles that were all pro-Trump. “This is being done by people who understand information structure, who are bulk buying domain names and then using automation to blast out a certain message. To make Trump look like he’s a consensus.” And that requires money? “That requires organisation and money. And if you use enough of them, of bots and people, and cleverly link them together, you are what’s legitimate. You are creating truth.”

But let’s go back a little further, to an article that Joe Romm of Climate Progress posted in 2011, “Denier-bots live! Why are online comments’ sections over-run by the anti-science, pro-pollution crowd?”

Romm pointed to leaked emails from a company called HBGary, and wrote,

..in some of the emails, HB Gary people are talking about creating “personas”, what we would call sockpuppets.

One of the emails went into details.

To build this capability we will create a set of personas on twitter, blogs, forums, buzz, and myspace under created names that fit the profile (satellitejockey, hack3rman, etc). These accounts are maintained and updated automatically through RSS feeds, retweets, and linking together social media commenting between platforms. With a pool of these accounts to choose from, once you have a real name persona you create a Facebook and LinkedIn account using the given name, lock those accounts down and link these accounts to a selected # of previously created social media accounts, automatically pre-aging the real accounts.

Using the assigned social media accounts we can automate the posting of content that is relevant to the persona. In this case there are specific social media strategy website RSS feeds we can subscribe to and then repost content on twitter with the appropriate hashtags. In fact using hashtags and gaming some location based check-in services we can make it appear as if a persona was actually at a conference and introduce himself/herself to key individuals as part of the exercise, as one example. There are a variety of social media tricks we can use to add a level of realness to all fictitious personas.

This kind of manipulative activity behind many of the comments on articles and videos throughout the internet should come as no surprise. It is why moderating comment sections has become a requirement to prevent distraction, controversy, confusion, and ad hominem attacks. Basic community guidelines have been abandoned since Web 2.0, which began in the mid-2000s, as automation, organization, and funding became more sophisticated. Before that, communities usually gathered on forums or bulletin board systems, which were mostly self-moderated and only occasionally interrupted by good-faith trolling, if it was an issue at all. Today all of this has been replaced by fake news, fake comments, and fake personas, and climate denial is no exception, as it uses the same instruments.

This month, an article in The New York Times titled “YouTube, the Great Radicalizer” looked at YouTube’s video recommendations and found:

What keeps people glued to YouTube? Its algorithm seems to have concluded that people are drawn to content that is more extreme than what they started with — or to incendiary content in general.

While climate denial on YouTube became rampant around 2007, with literally thousands of videos on themes ranging from Al Gore and Climategate to “It’s the Sun” and hoax theories (classic denial), in the past few years the major denial players have changed their narrative: some claim the next Ice Age is near (Grand Solar Minimum), while others conclude that it is too late to do anything anyway.

Other deniers have focused their efforts on building up their own YouTube channels, filled with fake science, fake conclusions, fake subscribers, and fake viewers. With all the recent talk in the mainstream media about fake news, climate denial and its fake science conclusions have somehow been ignored.

If you watch a controversial YouTube video, be it about Alex Jones’s latest conspiracy or how climate change is a scam, user votes are usually positive. To some degree this is normal, since people tend to consume content that satisfies their belief system, a tendency known as confirmation bias. Add in fake views and fake upvotes, and the fake impression is perfect. Another indicator of organized fake activity is that users who consume climate denial content become very active on other channels once a video starts trending. They usually begin downvoting en masse and commenting.

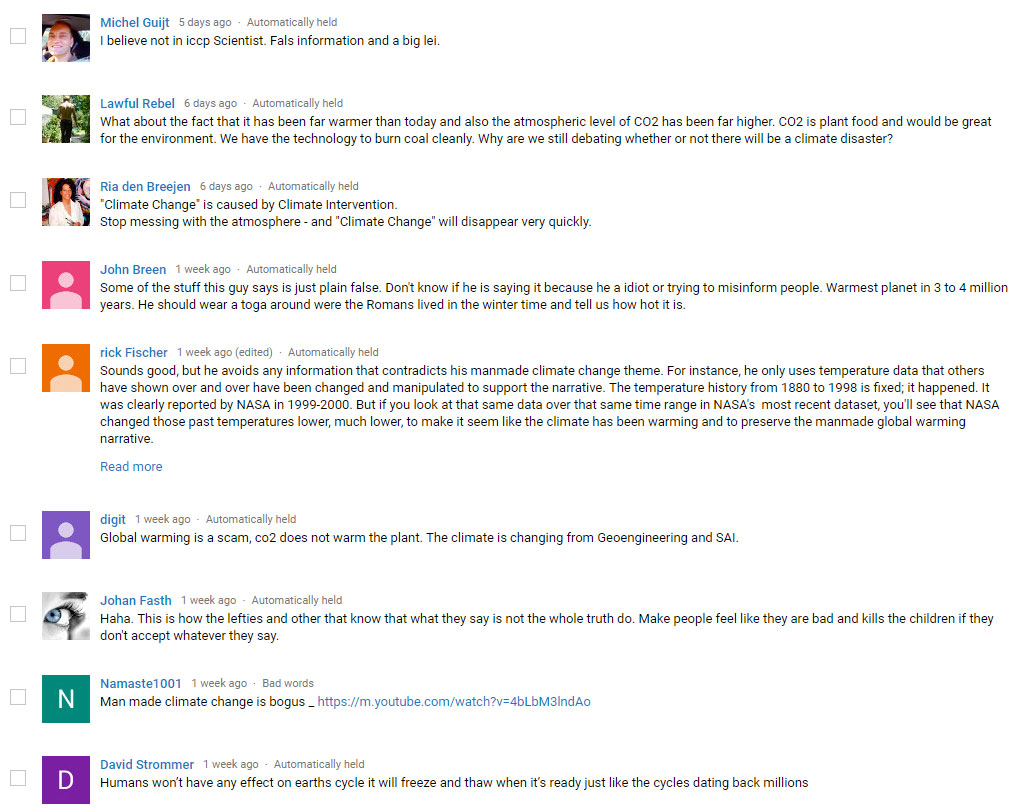

For instance, this happened with this video

The video now has over 350 comments in moderation, after we switched the comment system to publish approved comments only. Thus, climate deniers are left with downvoting.

When YouTube recommends content to its users, it should be content that is not deemed too controversial. Climate change is already wreaking havoc on many lives around the globe, and this trend will only get worse, with some estimates that hundreds of millions of people are at risk. YouTube should apply the same rigorous steps to climate denial, which is fake science, that it took to remove other controversial content.

To get an idea of how flawed YouTube’s recommendation system is, visit the website AlgoTransparency.org, which lists recommendations for topics such as global warming.

Unlike YouTube, Facebook has acted: it banned accounts related to fake news and recently banned Cambridge Analytica. Why does YouTube have such a hard time doing the same? It shouldn’t be too hard to remove climate denier content from recommended and trending videos.

About the Author: CLIMATE STATE
